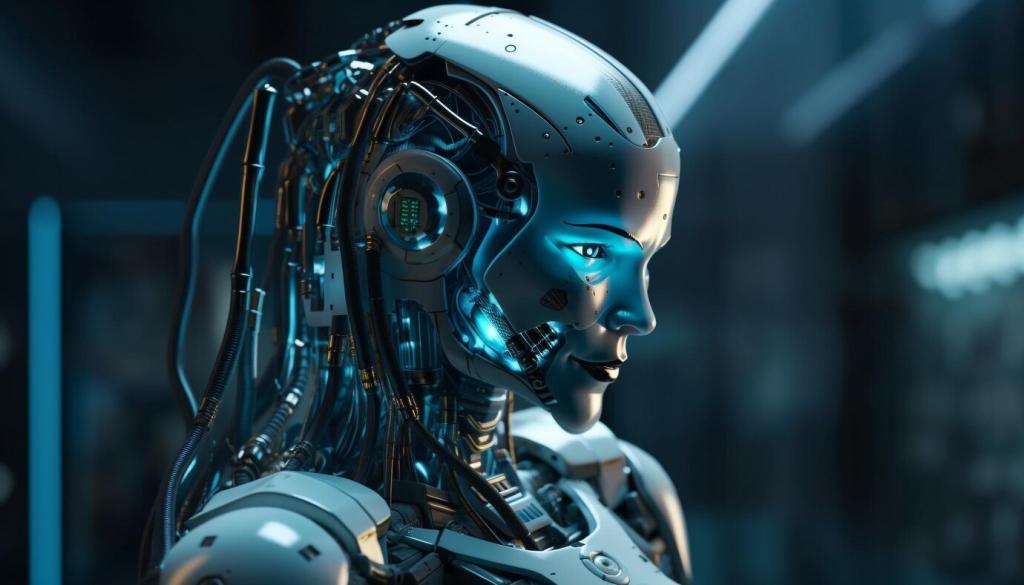

Human-Centric AI: Shaping the Future of Innovation

Human-Centric AI represents a transformative approach to artificial intelligence, one that ensures technology enhances human potential, well-being, and creativity. This philosophy prioritizes ethical considerations, meaningful collaboration, and inclusive development, keeping humanity's needs at the core of every AI endeavor. As industries and societies integrate intelligent systems more deeply, adopting a human-centric vision becomes ever more important. It fosters trust and transparency, and it drives innovation that serves everyone. Through this perspective, we can unlock AI's full potential while upholding the values and priorities that enrich human life. Explore how human-centric AI shapes the future by fostering responsible, innovative, and people-focused transformation across sectors.

Understanding Human-Centric AI

Defining the Concept

Historical Context

Key Principles

The Ethical Imperative

Addressing Bias

Ensuring Accountability

Promoting Transparency

Enhancing Human Potential

Bridging the Digital Divide

Representation in AI Development

Addressing Accessibility

Balancing Progress and Risk

Continuous Evaluation

Multi-Stakeholder Collaboration

Healthcare Transformation

Personalized Education